I fix AI adoption, train developers, and build the rest.

Most AI rollouts stall at the human layer, not the tech. I come in, find what's blocking adoption, set up the workflows and tooling that make it stick, train your developers to run them well, and build custom systems when nothing off the shelf will do.

How I can help

Consult, train, or build — depending on what you need.

AI tools were rolled out. Adoption stalled.

Implementation consulting

I come into your company, diagnose why AI adoption isn't working, and build the setup that fits your stack — together with you. Workflows, tooling configuration, and the team rituals that keep working long after I leave.

Your developers need to work with AI, not just hear about it.

AI & developer training

Educational workshops in whatever format fits: half-day deep dives or multi-week programmes, online or on-site. Hands-on with Claude Code, Cursor, and GitHub Copilot on real codebases. Commissioned by Cegos Integrata, Nobleprog, and other L&D providers.

You need it built — not just spec'd.

Custom development

Complete products from concept to production. AI systems, real-time applications, and full-stack web apps — business problem first, then code.

Why I deliver results

Strategic depth meets technical precision

Most AI initiatives stall at the human layer, not the technology. My background sits at that intersection — understanding how teams actually adopt new tools, and the engineering it takes to build them. That's why the systems I deliver get used, not just shipped.

Psychology BSc

How people actually adopt new tools

Trained in how teams actually adopt — or quietly reject — new tools. The reason adoption stalls is rarely technical, and treating it as such is the most common mistake.

Management MA

Business and engineering, both fluent

Fluent in both business goals and engineering trade-offs. I translate between CFO concerns and architecture decisions, so nothing important gets lost in handoff.

Co-Founder & CTO, The Happy Beavers

6 years scaling systems and teams

Six years scaling systems and leading teams at The Happy Beavers — automating 80% of internal operations and shipping production software end to end.

SDW scholar

Top 2% nationally, three years running

Three-time recipient of the Stiftung der Deutschen Wirtschaft scholarship, awarded to the top 2% of students nationally for academic and leadership excellence.

Selected projects

Complete systems shipped, from concept to production, serving real users

Tymeslot

Open-source scheduling with zero double-bookings across Google, Microsoft, and CalDAV calendars. Built with Elixir/Phoenix.

Transvexis

Specialised translation preserving voice and style across 39+ languages. 40–60% reduction in post-editing time vs. DeepL.

In A Nutshell

AI-powered semantic search for long-form YouTube videos. Ask questions, get answers with exact timestamp citations.

About me

AI Coding Trainer & Software Engineer

I'm Luka Breitig, an AI Coding Trainer & Software Engineer. I train engineering teams on Claude Code, Cursor and agentic AI workflows — and build production software in Elixir/Phoenix and Python. Dual background in Psychology and Management combined with hands-on engineering. Three production systems live and serving real users.

"Technology only works when people trust it. Whether I'm building a product, consulting on adoption, or training a team — I start with understanding the people, not just the code."

Blog

Practical insights on AI technologies and real-world applications

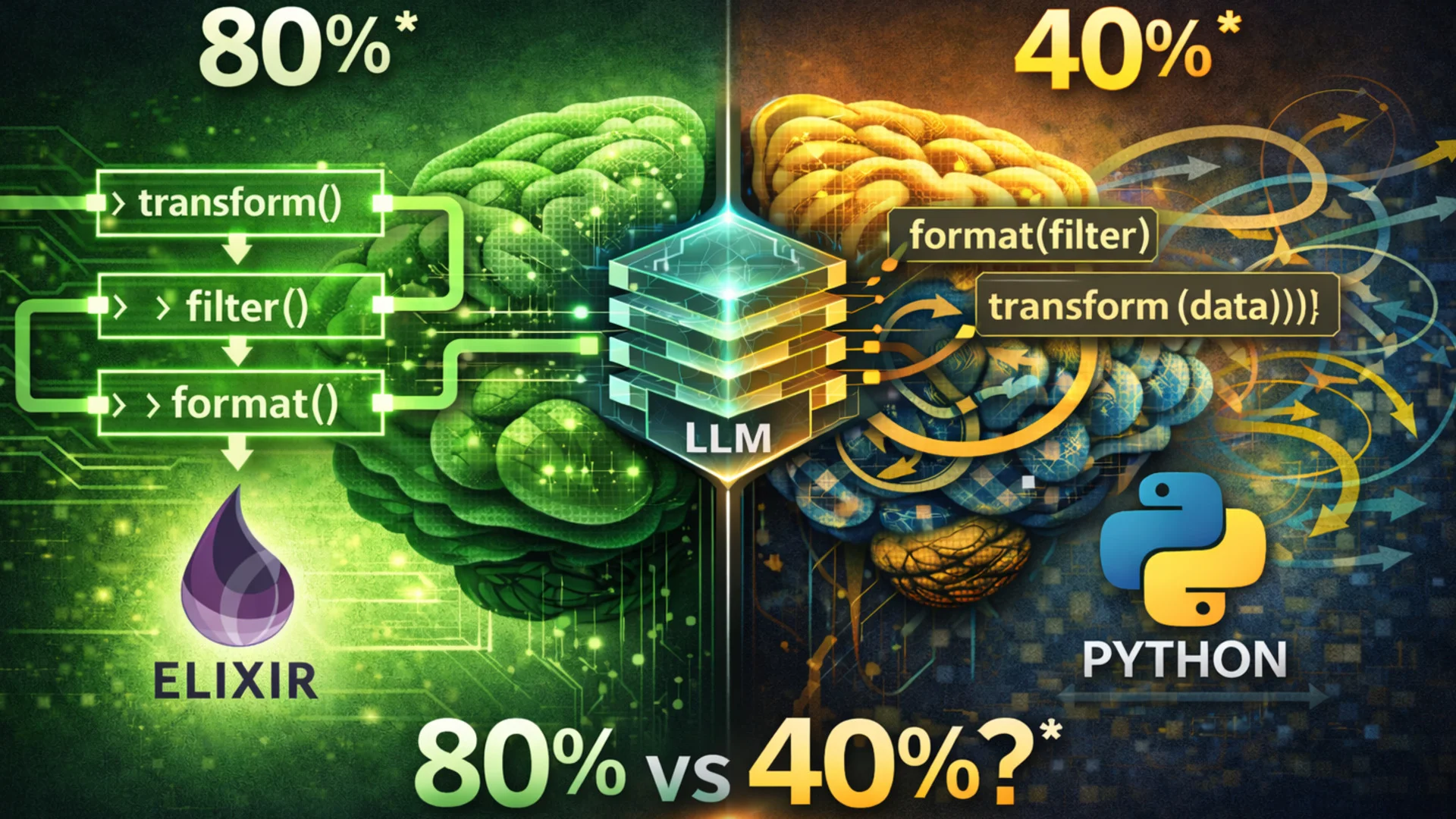

Do LLMs really write better Elixir than Python?

A Tencent benchmark ranked Elixir #1 for LLM code generation. As an Elixir developer, I dug into the data — the truth is more nuanced than the headline, but the structural advantages are real.

From chatbot to agent: a step-by-step mental model for understanding agentic AI

Moving from conversational mimicry to autonomous execution: understand how agentic AI systems pursue complex goals, use tools, and adapt to changing environments through a continuous reasoning loop.

Insights from the field and production-grade AI development

To the blog

Wondering if I'm your person?

Ten quick questions in, you'll know whether I can actually help — and how.

Frequently asked questions

Common questions about AI adoption, consulting engagements, and how the work fits together

A typical engagement runs in three phases. First I embed with your team — observing real workflows, current tool usage, and where the friction actually sits. Then I design and configure the setup: agent and tooling configuration, prompts and playbooks, and the integrations that make the workflow practical for your stack. Finally, I work alongside your developers in pair sessions, code reviews, and iteration cycles until the new practices stick. Scope is agreed per outcome, not per hour.

We agree on baseline metrics before the engagement starts, typically PR cycle time, time-to-first-commit on new tasks, share of pull requests touched by AI tools, and developer satisfaction. After the workflows are embedded, we re-measure. The goal is a defensible shift you can show your CFO, not a vibes-based 'it feels faster'. If the numbers do not move, we have a problem to solve together rather than a result to celebrate.

Training is educational: workshops, hands-on sessions, and multi-day programmes that teach your developers how to use AI coding tools effectively. It is the right fit when the gap is primarily knowledge. Consulting goes further: I diagnose why adoption is not sticking, design the workflow and tooling setup, and embed it with your team over several weeks. Often the answer is both — first set up the system, then train the wider organisation on how to use it well.

It is almost never the technology. The recurring patterns are tools rolled out without configuration for the actual codebase, no clear guidance on when to use AI versus write by hand, no shared prompts or playbooks, and no follow-through after the initial training session. My psychology background combined with hands-on engineering means I work on both layers — the human one and the technical one — and diagnose the real bottleneck before suggesting solutions.

That is the whole point. I leave behind documented playbooks, configured tooling (CLAUDE.md, agent configurations, prompt libraries), and at least one internal champion who can extend the setup. The success criterion is not 'Luka is still in the building' — it is that productivity gains compound after I am gone. Top-up training and ad-hoc consulting are available when something genuinely new comes up, but they are optional, not built-in dependencies.

Off-the-shelf tools are excellent for general tasks. Custom AI matters when you need deep integration with your stack, use of proprietary data, or enforcement of complex business rules. The systems I build automate multi-step workflows — syncing CRM data, running inventory checks, routing approvals — which a chatbot or simple API wrapper cannot do. The decision usually comes down to whether the workflow is your competitive edge or just a productivity helper.

Have a specific question about your AI project?